Daily scanning operates under the outdated assumption that there’s ample time between vulnerability discovery and exploitation. However, threat actors now weaponize newly discovered vulnerabilities within hours of publication. When scanning runs once per day, systems remain exposed for hours or an entire day before the next scheduled scan detects emerging issues, leaving critical security gaps.

Organizations implementing preemptive exposure management programs are three times less likely to suffer breaches, making Attack Surface Management tool selection a critical security investment for 2026.

Key Takeaways

Security leaders must adapt their attack surface management strategies as threat actors now weaponize vulnerabilities within hours of discovery, making traditional daily scanning obsolete.

- Hourly scanning is now mandatory – Daily vulnerability scans can leave new exposures undetected for up to 24 hours, while attackers exploit new vulnerabilities within hours of publication.

- Verified exposures beat theoretical scores – Focus on vulnerabilities with a working proof-of-concept rather than chasing high CVSS scores for issues that may never be exploited.

- Threat intelligence drives smart prioritization – Use dark web intelligence and active campaign data to prioritize real attacker targets over theoretical vulnerability severity.

- Continuous discovery prevents blind spots – Almost 30 percent of large businesses see less than 75 percent of their assets; automated discovery workflows capture ephemeral cloud workloads and shadow IT.

- Integration capabilities determine success – Choose ASM tools that seamlessly connect with existing SIEM, SOAR, and vulnerability management systems for automated remediation workflows.

The shift from reactive to preemptive security requires tools that eliminate exposure before exploitation occurs. Because threat actors now weaponize newly found vulnerabilities within hours of publication, the traditional daily scanning approach is no longer sufficient, and attack surface management tools must evolve accordingly. Organizations that build their security investments around exposure management programs are three times less likely to suffer a breach. This piece explores the features that define market-leading ASM solutions in 2026, how to integrate threat intelligence into your attack surface management program, and the criteria security leaders should use when evaluating vendors.

The Current State of the Attack Surface Management Market

Market growth and adoption trends

The attack surface management market reached $1.03 billion in 2025 and is projected to reach $1.25 billion in 2026, with forecasts showing expansion to $5 billion by 2034 at a compound annual growth rate of 21.03% [1]. North America dominates this market with a 34.97% share [1], while cloud-based deployment models captured 67.4% of revenue in 2023 [2]. This acceleration reflects a growing recognition that organizations can no longer afford blind spots in their digital infrastructure.

The numbers reveal significant visibility gaps. Almost 30% of large businesses see less than 75% of their assets [3], leaving them exposed to threats they cannot address. Organizations with larger attack surfaces face nearly double the risk of multiple cyberattacks [1]. This matters because 70% of organizations have experienced at least one breach originating from an unknown, unmanaged, or poorly managed internet-facing asset [1].

Cybersecurity budgets mirror this urgency. Global security spending is projected to rise from $213 billion in 2025 to $240 billion in 2026, an increase of roughly 12-13% [4]. Even so, many CISOs acknowledge that rising budgets may still fall short of managing evolving risks, reflecting a gap between funding and the scale of the threats organizations face [4].

Key drivers shaping ASM demand in 2026

Digital transformation initiatives, cloud adoption, and remote work models have expanded attack surfaces and exposed them to a wider range of cyber threats. Cyberattacks affected over 343 million people in 2023 alone [1]. Data breaches surged 72% between 2021 and 2023, surpassing previous records [1]. These statistics help explain why 43% of IT and business leaders believe the attack surface is growing out of control, and 73% express concern about the size of their digital attack surface [1].

The rise of generative AI introduces dual pressures: organizations invest heavily in AI and automation tools while grappling with shadow AI and the potential exposure of sensitive data to insecure systems. At the same time, attack surface management tools are converging with Extended Detection and Response (XDR) capabilities to provide unified security visibility, reduce tool sprawl, and improve threat identification.

Regulatory frameworks such as the UK Cyber Security and Resilience Bill and the EU Cyber Resilience Act place expectations on organizations to manage exposure across their digital environments [4]. Regulated sectors, including healthcare, finance, and manufacturing, are finding that reactive security practices are insufficient and potentially non-compliant.

The move from reactive to preemptive security

We’ve optimized cybersecurity for response metrics: mean time to detect, mean time to respond, mean time to contain. Every one of these metrics assumes the attack has already begun. The next stage focuses not on responding faster but on preventing attacks before they succeed.

Preemptive cybersecurity eliminates exposure before exploitation occurs. This approach identifies emerging threats early, predicts which ones are likely to materialize, determines where they intersect with internal exposures, and remediates those exposures before adversaries can exploit them [5]. Attack path mapping shows how attackers could use vulnerabilities to move from initial access to their objectives, helping security teams focus resources on important threats rather than false positives.

Traditional vulnerability scanners treat every vulnerability as potentially exploitable, yet only a small percentage are actually exploitable, and an even smaller number have been exploited in the wild. Exposure management solutions verify whether vulnerabilities are exploitable, enabling teams to prioritize remediation based on real business impact. This shift reduces mean time to remediation by automating and orchestrating the remediation process, which shrinks the window in which attackers can exploit vulnerabilities.

Critical ASM Features That Define Market Leaders

Hourly scanning versus daily monitoring

Daily scans operate under an obsolete assumption: that ample buffer time exists between vulnerability discovery and exploitation. That window has collapsed. When scanning runs once per day, a newly introduced exposure can go undetected for up to 24 hours before the next scheduled scan picks it up. Hourly scanning detects vulnerabilities as they emerge rather than the following day, because threats evolve by the hour, not by the day. Continuous monitoring provides immediate visibility into every exposed asset, misconfigured service, and shadow IT risk as it appears. Attack surface management tools operating in environments where development and operations changes happen constantly need this frequency.
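As a rough illustration of why cadence matters, the sketch below computes how long a new exposure waits for the next scheduled scan. It assumes, as a simplification not taken from the article, that exposures appear at uniformly random times and that every scan detects everything present; under that assumption the average wait is half the scan interval and the worst case is the full interval.

```python
def detection_delay_hours(scan_interval_hours: float) -> tuple[float, float]:
    """Return (average, worst-case) hours between an exposure appearing and
    the next scheduled scan detecting it.

    Simplifying assumption: exposures appear at uniformly random times and
    every scan detects all exposures present when it runs.
    """
    if scan_interval_hours <= 0:
        raise ValueError("scan interval must be positive")
    average = scan_interval_hours / 2  # mean of a uniform wait over one interval
    worst = scan_interval_hours        # exposure appears just after a scan finishes
    return average, worst


for label, interval in [("daily", 24.0), ("hourly", 1.0)]:
    avg, worst = detection_delay_hours(interval)
    print(f"{label:>6}: average {avg:.1f} h, worst case {worst:.1f} h")
# Prints:
#  daily: average 12.0 h, worst case 24.0 h
# hourly: average 0.5 h, worst case 1.0 h
```

Because the model ignores scan duration and triage time, real-world delays would be longer than these figures.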

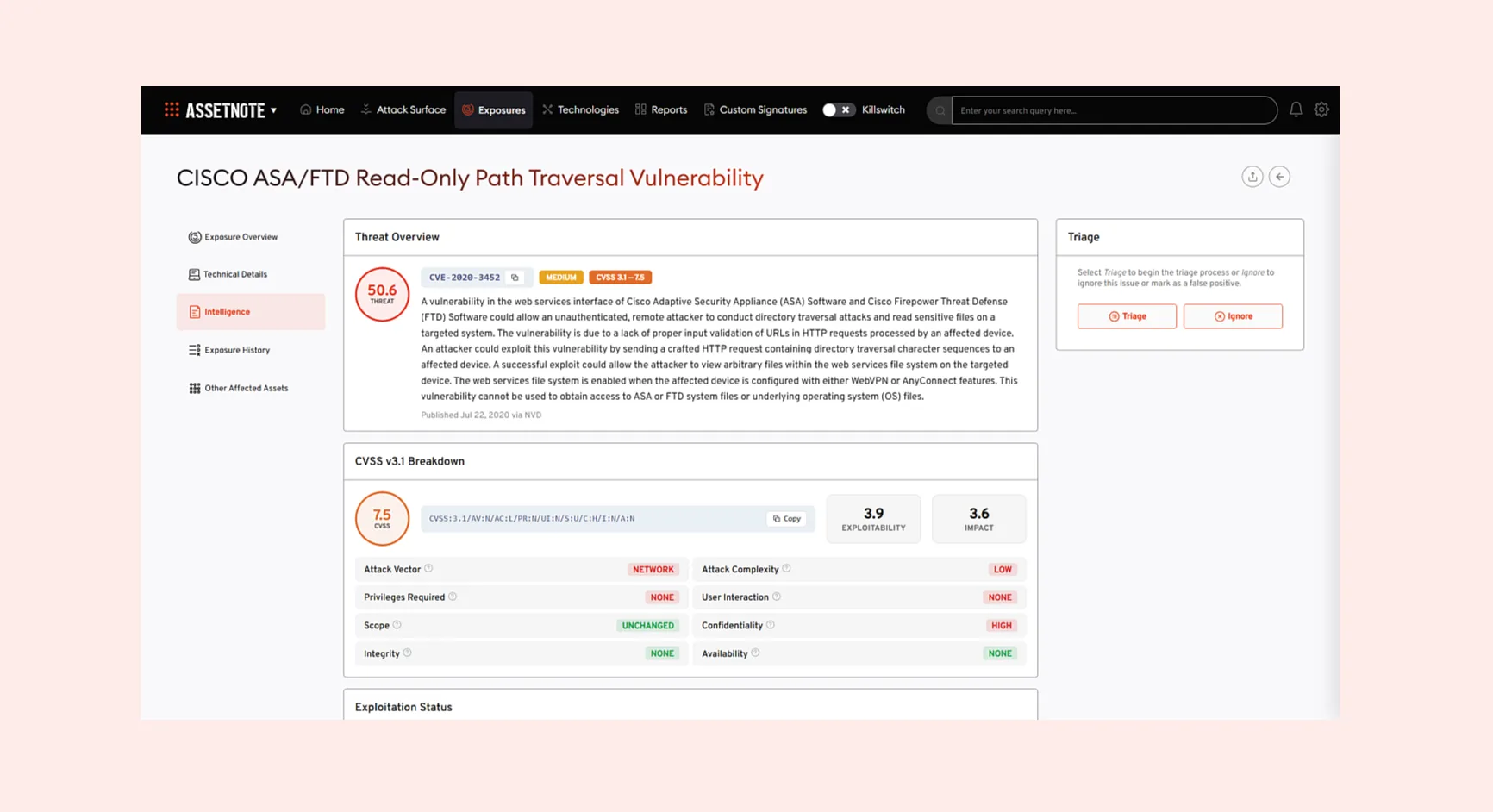

Verified exposures with working proof-of-concept

Exposure discoveries require verification. Leading platforms provide exact proof-of-concept demonstrations so security analysts can replicate findings themselves. With a method to reproduce each finding, security teams no longer waste time checking whether it is a false positive. Each verified exposure comes with a proof-of-concept that can be tested, replicated, and closed before attackers act. This verification method achieves two outcomes. First, it dramatically reduces false positives so that only actionable exposures are prioritized. Second, it aligns remediation efforts with real risk, ensuring resources are spent where they will have the most effect. Verification itself relies on programmatic techniques developed through original research.

Novel security research and early vulnerability alerts

Security research teams identify exploitable vulnerabilities in popular third-party software. Corresponding vulnerability checks are then added to the platform, so customers can be notified much earlier, at the point of disclosure to the vendor. Research analyzing hundreds of spikes in malicious activity targeting edge technologies found that 80% of spikes were followed by a new CVE disclosure within six weeks, and 50% within three weeks [5]. These patterns appeared exclusively in enterprise edge technologies such as VPNs and firewalls. This intelligence gives security leaders weeks to harden defenses and prepare strategic responses before a CVE is published.

Low-noise, high-signal alerting systems

Alert fatigue caused by irrelevant notifications creates a signal-to-noise problem that hurts team performance: analysts lose time chasing false leads while real threats slip by unnoticed. When alerts are driven by high-confidence data and focused only on verified threats, teams receive fewer distractions and more useful information, with less clutter, less over-alerting, and less wasted effort. Attack surface management programs should treat this approach as standard practice.
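A minimal sketch of that filtering idea, assuming each finding carries a confidence score and a flag recording whether a proof-of-concept reproduced it. The `Finding` fields, the 0.9 threshold, and the example hostnames are hypothetical; real platforms expose their own schemas.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    asset: str
    issue: str
    confidence: float  # hypothetical 0.0-1.0 confidence score from the scanner
    verified: bool     # True if a proof-of-concept reproduced the issue


def alerts_to_send(findings: list[Finding], min_confidence: float = 0.9) -> list[Finding]:
    """Keep verified, high-confidence findings and emit one alert per (asset, issue)."""
    seen: set[tuple[str, str]] = set()
    alerts: list[Finding] = []
    for f in findings:
        key = (f.asset, f.issue)
        if not f.verified or f.confidence < min_confidence or key in seen:
            continue  # drop unverified, low-confidence, or duplicate findings
        seen.add(key)
        alerts.append(f)
    return alerts


findings = [
    Finding("vpn.example.com", "outdated-vpn-firmware", 0.95, True),
    Finding("vpn.example.com", "outdated-vpn-firmware", 0.97, True),  # duplicate
    Finding("www.example.com", "open-redirect", 0.60, True),           # low confidence
    Finding("api.example.com", "exposed-admin-panel", 0.99, False),    # not verified
]
print([(a.asset, a.issue) for a in alerts_to_send(findings)])
# Prints: [('vpn.example.com', 'outdated-vpn-firmware')]
```

Four raw findings collapse into one alert; lowering `min_confidence` trades more alerts for fewer missed issues.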

Detailed asset discovery across cloud and third-party infrastructure

Cloud environments present unique challenges. Instances and services can be created and decommissioned in moments, leaving unmanaged instances that asset management tools may not track. Continuous monitoring keeps pace with this dynamic environment and captures new instances and changes quickly. Discovery methods combine API integrations, network scans, and agents to cover cloud, on-premises, hardware, and software assets. Each asset is enriched and monitored for potential risk, using automated reconnaissance techniques across web and mobile channels.
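The sketch below shows one way to combine results from several discovery methods into a single inventory, assuming each method returns a list of asset identifiers (hostnames, IPs, instance IDs). Source names and identifiers are hypothetical, and real deduplication usually needs richer matching than lowercase string comparison.

```python
from collections import defaultdict


def merge_inventory(sources: dict[str, list[str]]) -> dict[str, set[str]]:
    """Merge asset identifiers reported by several discovery methods.

    Returns a mapping of normalized asset identifier -> set of sources that saw it,
    so assets seen by only some methods (for example, no agent installed) stand out.
    """
    inventory: dict[str, set[str]] = defaultdict(set)
    for source, assets in sources.items():
        for asset in assets:
            inventory[asset.strip().lower()].add(source)  # normalize before merging
    return dict(inventory)


sources = {
    "cloud_api": ["i-0abc123", "db.internal.example.com"],
    "network_scan": ["10.0.0.5", "DB.internal.example.com"],
    "agent": ["10.0.0.5"],
}
inventory = merge_inventory(sources)
no_agent = sorted(a for a, seen_by in inventory.items() if "agent" not in seen_by)
print(no_agent)
# Prints: ['db.internal.example.com', 'i-0abc123']
```

Recording which methods saw each asset is what makes coverage gaps visible: here two assets would be missed by an agent-only approach.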

Threat Intelligence as an ASM Prioritization Layer

The gap between exploitability and actual targeting

CVSS scores measure theoretical severity, not actual risk. A CVSS 9.8 vulnerability that threat actors never exploit presents less risk than a CVSS 6.5 vulnerability actively used in ransomware campaigns [5]. Yet most security programs still chase the highest scores first, wasting time while real threats stay exposed. Severity does not necessarily equal risk.

For most organizations, answering whether a CVE is exploitable in their specific environment means days of investigation or waiting for the next pentest cycle [7]. What security programs lack is validation, not vulnerability data. Scanners report theoretical vulnerabilities in isolation; they don’t confirm reachability, business logic context, data flow conditions, or end-to-end exploitability. Traditional pentest programs cannot keep pace with code velocity either: by the time testing begins, code and dependencies have changed and the exposure has shifted.

Attackers think in attack paths, not individual vulnerabilities. A single low-severity misconfiguration might be harmless on its own, but combined with outdated software and a weak password policy, it can become the entry point to your entire network. Scanners give each issue a score and move on; they don’t show how those issues connect or what damage an attacker could cause by chaining them together.
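Attack path analysis is commonly modelled as a graph search. The sketch below is an illustration rather than any vendor's method: it treats each issue as an edge that lets an attacker move between two hypothetical nodes, and uses breadth-first search to find the shortest chain from the internet to a target, mirroring the misconfiguration, outdated software and weak password example above.

```python
from collections import deque


def find_attack_path(edges, start, target):
    """Return the shortest list of issues an attacker could chain from start to target.

    edges: (from_node, to_node, issue) tuples, each meaning "exploiting `issue`
    lets an attacker move from from_node to to_node". Returns None if unreachable.
    """
    graph = {}
    for src, dst, issue in edges:
        graph.setdefault(src, []).append((dst, issue))

    queue = deque([(start, [])])  # (current node, issues exploited so far)
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == target:
            return path
        for nxt, issue in graph.get(node, []):
            if nxt not in visited:
                visited.add(nxt)
                queue.append((nxt, path + [issue]))
    return None


edges = [
    ("internet", "web-server", "outdated software"),
    ("web-server", "file-share", "low-severity misconfiguration"),
    ("file-share", "domain-controller", "weak password policy"),
    ("internet", "mail-gateway", "verbose service banner"),
]
print(find_attack_path(edges, "internet", "domain-controller"))
# Prints: ['outdated software', 'low-severity misconfiguration', 'weak password policy']
```

Breadth-first search minimizes the number of steps; weighting edges by exploit difficulty and switching to Dijkstra's algorithm would instead rank paths by attacker effort.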

Using dark web intelligence to prioritize exposures

Threat intelligence applies insights from frontline incident response and adversary research to discovered assets. Intelligence gathered from these sources creates checks that validate when assets are vulnerable or exposed to exploitation seen in the wild. Partnering with providers that overlay assets with indicators of compromise and deep and dark web monitoring alerts security teams to malicious activity involving the brand or its assets before threat actors achieve their objectives.

Dark web chatter and leaked credentials reveal what threat actors are preparing to weaponize. For example, attackers setting up infrastructure to mimic your brand represent a high-risk exposure that demands immediate action. These signals should feed directly into exposure prioritization.
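One simple way to surface brand-mimicking infrastructure is to compare newly observed domains against your brand name. The sketch below assumes you already have a feed of observed domains (for example, from certificate transparency logs or newly registered domain lists) and uses the similarity ratio from Python's `difflib.SequenceMatcher`; the 0.8 threshold, the brand name and the example domains are hypothetical, and production monitoring typically goes much further.

```python
from difflib import SequenceMatcher


def flag_lookalikes(observed, brand, owned, threshold=0.8):
    """Flag domains whose first label contains or closely resembles the brand name.

    observed: iterable of domain names from some discovery feed (assumed input).
    owned: set of domains the organization legitimately controls.
    """
    flagged = []
    for domain in observed:
        d = domain.lower().rstrip(".")
        if d in owned:
            continue  # our own domain, not an impersonation
        label = d.split(".")[0]  # naive: ignores subdomains and multi-part TLDs
        similarity = SequenceMatcher(None, label, brand).ratio()
        if brand in label or similarity >= threshold:
            flagged.append(d)
    return flagged


observed = [
    "examplebank.com",        # legitimately owned
    "examp1ebank.com",        # digit "1" swapped for letter "l"
    "examplebank-login.net",  # brand plus keyword
    "examplebank.net",        # same name, different TLD
    "weatherapp.io",          # unrelated
]
print(flag_lookalikes(observed, "examplebank", owned={"examplebank.com"}))
# Prints: ['examp1ebank.com', 'examplebank-login.net', 'examplebank.net']
```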

Security teams using platforms that cut through noise by alerting only on validated, exploitable exposures save valuable analyst time while delivering better security outcomes. Other tools may send 1,000 alerts and force teams to waste time trying to figure out what is real [8]. High-signal approaches allow teams to prioritize efforts and respond faster to genuine threats.

Real attacker intent versus theoretical risk scores

Attackers prioritize reachability and business value, not abstract severity numbers. Defenders who prioritize differently create a misalignment between perceived risk and actual exposure. Contextual prioritization asks how dangerous a vulnerability is to your organization right now, not how severe it is in theory.

Applying threat intelligence to attack surface management requires mapping intelligence to discovered assets. This helps identify and prioritize critical issues or suspicious activity. Considerations to prioritize include asset criticality to revenue streams and brand reputation, threat actor TTPs and targeting patterns, exploitation status in the wild, and organizational risk tolerance. Active campaigns targeting your sector show which exploits are in play now. This context narrows focus to what threat actors care about today.
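To show how these considerations can reorder a queue, here is a hypothetical scoring sketch that scales CVSS severity by exploitation status, sector targeting and asset criticality. The field names and multipliers are illustrative assumptions rather than an industry standard, and organizational risk tolerance is left out for brevity. With these weights, the actively exploited CVSS 6.5 issue outranks the never-exploited CVSS 9.8 one, as described earlier.

```python
from dataclasses import dataclass


@dataclass
class Exposure:
    name: str
    cvss: float               # CVSS base score, 0.0-10.0
    exploited_in_wild: bool   # threat intel shows active exploitation
    targets_our_sector: bool  # active campaigns against our industry
    asset_criticality: int    # 1 (low) to 3 (revenue- or brand-critical)


def contextual_priority(e: Exposure) -> float:
    """Hypothetical risk score: CVSS severity scaled by threat and business context.

    The multipliers below are illustrative assumptions, not an industry standard.
    """
    score = e.cvss / 10.0  # normalize severity to 0-1
    if e.exploited_in_wild:
        score *= 3.0       # active exploitation outweighs theoretical severity
    if e.targets_our_sector:
        score *= 1.5
    return score * e.asset_criticality


exposures = [
    Exposure("critical CVSS, never exploited", 9.8, False, False, 2),
    Exposure("medium CVSS, used by ransomware", 6.5, True, True, 2),
]
for e in sorted(exposures, key=contextual_priority, reverse=True):
    print(f"{contextual_priority(e):.2f}  {e.name}")
# Prints:
# 5.85  medium CVSS, used by ransomware
# 1.96  critical CVSS, never exploited
```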

Building a Robust Attack Surface Management Program

Defining scope and asset inventory

Building an attack surface management program starts with detailed asset visibility. You cannot prioritize what you cannot see [6]. Organizations must identify every potential attack vector within their environment. This includes network devices, endpoints, applications, cloud resources and data repositories. The inventory captures domains, IP addresses, cloud instances, APIs, serverless functions, managed services, SaaS applications and vendor portals across on-premises, cloud and hybrid infrastructures.

The complexity creates serious problems: 73% of security leaders have experienced incidents caused by unknown or unmanaged assets [9]. Shadow IT deployments, undocumented legacy systems, and ephemeral workloads are consistent blind spots, and attack surface management tools typically discover 30% more cloud assets than security and IT teams initially know they have [10]. Complete asset visibility provides both a horizontal list of IT assets and deep vertical detail for each, including hardware specifications, installed software, network connections, approved users, applied patches, and open vulnerabilities.

Implementing continuous discovery workflows

Point-in-time assessments become outdated the moment development teams deploy new code or infrastructure changes. Continuous discovery maintains ongoing surveillance of networks and systems to identify anomalies without delay. Automated workflows handle asset discovery at scale using API-based scanning, network reconnaissance, and certificate analysis. These methods close gaps left by agent-based approaches, which miss containerized workloads that spin up and disappear before an agent can be installed.

Platforms detect when new assets appear, configurations change or systems go offline. Security teams receive alerts to act before attackers exploit these modifications. Organizations should integrate attack surface management with existing security operations tools like SIEM, EDR, ITSM and patch management systems. This integration pushes remediation steps directly into workflows already in place.
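Detecting new, changed and offline assets comes down to comparing successive discovery snapshots. A minimal sketch, assuming each snapshot maps an asset identifier to a configuration fingerprint; the hostnames and port strings are hypothetical, and each non-empty bucket would become an alert or ticket in the integrated tooling.

```python
def diff_snapshots(previous: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Compare two discovery snapshots that map asset -> configuration fingerprint.

    The fingerprint can be any string summarizing configuration, such as a sorted list
    of open ports or a TLS certificate hash (an assumption made for this sketch).
    """
    prev_assets, curr_assets = set(previous), set(current)
    return {
        "new": sorted(curr_assets - prev_assets),
        "offline": sorted(prev_assets - curr_assets),
        "changed": sorted(a for a in prev_assets & curr_assets if previous[a] != current[a]),
    }


previous = {"api.example.com": "443", "old.example.com": "80,443", "vpn.example.com": "443"}
current = {"api.example.com": "443,8080", "vpn.example.com": "443", "dev.example.com": "22,443"}
print(diff_snapshots(previous, current))
# Prints: {'new': ['dev.example.com'], 'offline': ['old.example.com'], 'changed': ['api.example.com']}
```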

Establishing remediation priorities based on threat context

Security teams identify more vulnerabilities than they have resources to fix quickly. Risk-based prioritization assigns scores that consider vulnerability severity, asset criticality, exploitability status, and business impact rather than CVSS alone. Effective remediation requires mapping each issue to a responsible team, with specific fix instructions and defined timelines based on risk tier. Organizations working with more than 1,000 third parties must also include processes to notify vendors of serious incidents, which helps prevent cascading supply chain effects [12]. Regular assessments confirm that new assets are integrated correctly and that vulnerability assessments reflect the current state of the enterprise.
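The mapping from risk tier to owner and deadline can be sketched as a small ticket builder. The SLA values, field names and team names below are hypothetical placeholders for an organization's own policy and asset ownership data; unowned assets are routed to a fallback queue so they are not silently dropped.

```python
from datetime import date, timedelta

# Hypothetical remediation SLAs per risk tier, in days; set these from your own policy.
SLA_DAYS = {"critical": 2, "high": 7, "medium": 30, "low": 90}


def build_ticket(finding: dict, owners: dict, found_on: date) -> dict:
    """Turn a prioritized finding into a remediation ticket with an owner and due date."""
    tier = finding["tier"]
    return {
        "title": f"[{tier.upper()}] {finding['issue']} on {finding['asset']}",
        # Unowned assets fall back to a triage queue instead of being dropped.
        "owner": owners.get(finding["asset"], "security-triage"),
        "fix": finding["fix"],
        "due": found_on + timedelta(days=SLA_DAYS[tier]),
    }


ticket = build_ticket(
    {"asset": "vpn.example.com", "issue": "outdated firmware", "tier": "critical",
     "fix": "Upgrade to the vendor's patched release"},
    owners={"vpn.example.com": "network-team"},
    found_on=date(2026, 3, 2),
)
print(ticket["title"], ticket["owner"], ticket["due"])
# Prints: [CRITICAL] outdated firmware on vpn.example.com network-team 2026-03-04
```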

Selecting the Right ASM Tool for Enterprise Security

Vendor evaluation criteria for 2026

Vendor selection requires verification across multiple dimensions to confirm that a tool aligns with your security requirements and technical environment. With the ASM market forecast to grow at a compound annual rate exceeding 30% through 2030, security leaders must navigate an increasingly crowded marketplace [11]. Before you shortlist vendors, confirm they meet baseline requirements: a continuous discovery cadence with near-real-time detection rather than weekly scans, automated asset attribution that maps ownership without manual classification, exploitability-based prioritization that incorporates active threat intelligence, and cloud API validation that verifies security controls function as configured.

Request trial access or demos that allow your team to test real scenarios against your own systems. Customer references help you assess accuracy in practice. Check the vendor’s approach to vulnerability verification as well: solutions that combine automated scanning with human validation deliver more accurate results than purely automated platforms.

Integration capabilities with existing security stack

Attack surface management tools must connect smoothly with your SIEM, SOAR, vulnerability management tools, and ticketing systems. Native integrations reduce manual effort and enable automated workflows for vulnerability management and incident response. Verify that the platform generates standardized logs that can be sent to your SIEM, and check whether it can block merges in DevSecOps workflows when critical vulnerabilities are identified. Ask vendors for API documentation and details of their integration partnerships to ensure compatibility with your environment.
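To make the merge-blocking check concrete, here is a minimal CI gate sketch. It assumes the platform can export findings as a JSON array whose objects include `severity`, `id` and `asset` fields; those field names, the file name and the critical-only policy are hypothetical. Most CI systems treat a non-zero exit code as a failed check, which is what lets a step like this block a merge.

```python
import json
import sys

# Hypothetical policy: only findings at these severities block a merge.
BLOCKING_SEVERITIES = {"critical"}


def merge_gate(findings: list[dict]) -> int:
    """Return a process exit code for CI: 1 blocks the merge, 0 allows it."""
    blocking = [f for f in findings
                if str(f.get("severity", "")).lower() in BLOCKING_SEVERITIES]
    for f in blocking:
        print(f"BLOCKING: {f.get('id', '?')} on {f.get('asset', '?')}", file=sys.stderr)
    return 1 if blocking else 0


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python merge_gate.py findings.json
    # Assumes the scanner can export findings as a JSON array of objects.
    with open(sys.argv[1]) as fh:
        sys.exit(merge_gate(json.load(fh)))
```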

Measuring ASM program effectiveness

Security teams measure their impact through data-driven metrics. Mean Time to Remediation tracks how long teams take to remediate confirmed exposures and identifies workflow bottlenecks. Mean Time of Exposure measures the time between an exposure appearing and its detection; shorter intervals reduce attacker dwell time. Remediation velocity shows whether security efforts keep pace with evolving threats compared with industry benchmarks. Asset discovery coverage indicates the percentage of internet-facing assets under active monitoring.
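A sketch of how three of these metrics could be computed from exposure records. It follows the definitions above for Mean Time of Exposure (appearance to detection) and assumes that MTTR is measured from detection to remediation; it also assumes each exposure has a reliable appearance timestamp (for example, from deployment logs). Record fields and numbers are hypothetical.

```python
from datetime import datetime
from statistics import mean


def hours_between(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def asm_metrics(exposures: list[dict], monitored_assets: int, total_assets: int) -> dict:
    """Compute headline ASM metrics from exposure records.

    Each record needs 'appeared' and 'detected' datetimes; 'remediated' is None
    while an exposure is still open, and open exposures are excluded from MTTR.
    """
    mtte = mean(hours_between(e["appeared"], e["detected"]) for e in exposures)
    closed = [e for e in exposures if e.get("remediated")]
    mttr = mean(hours_between(e["detected"], e["remediated"]) for e in closed) if closed else None
    return {
        "mean_time_of_exposure_h": mtte,
        "mean_time_to_remediation_h": mttr,
        "discovery_coverage_pct": 100 * monitored_assets / total_assets,
    }


records = [
    {"appeared": datetime(2026, 1, 5, 9), "detected": datetime(2026, 1, 5, 10),
     "remediated": datetime(2026, 1, 6, 10)},
    {"appeared": datetime(2026, 1, 7, 8), "detected": datetime(2026, 1, 7, 11),
     "remediated": None},
]
print(asm_metrics(records, monitored_assets=180, total_assets=200))
# Prints: {'mean_time_of_exposure_h': 2.0, 'mean_time_to_remediation_h': 24.0, 'discovery_coverage_pct': 90.0}
```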

Conclusion

Attack surface management has moved from reactive scanning to preemptive security. We’ve looked at why daily scans are no longer enough when threat actors weaponize vulnerabilities within hours. Market leaders deliver an hourly scanning cadence and verified exposures with working proof-of-concept. They also provide novel security research that delivers early alerts, and high-signal threat intelligence that cuts through noise.

When evaluating vendors, prioritize platforms that integrate threat intelligence into exposure prioritization. This keeps your team focused on exploitable vulnerabilities that attackers actually target rather than on theoretical CVSS scores. Choose tools that provide continuous discovery and automated workflows, and make sure they integrate with your existing security stack so they can protect you meaningfully in 2026.

To learn more about Searchlight’s Attack Surface Management tool, book a demo.

[1] – https://www.fortunebusinessinsights.com/attack-surface-management-market-110386

[2] – https://www.grandviewresearch.com/industry-analysis/attack-surface-management-market-report

[3] – https://www.nccgroup.com/the-expanding-importance-of-attack-surface-management-2026-outlook/

[4] – https://outpost24.com/blog/attack-surface-management-predictions/

[5] – https://blog.checkpoint.com/executive-insights/how-threat-intelligence-and-multi-source-data-drive-smarter-vulnerability-prioritization/

[6] – https://www.wiz.io/academy/vulnerability-management/vulnerability-prioritization

[7] – https://blog.qualys.com/product-tech/2018/05/07/how-to-prioritize-vulnerabilities-in-a-modern-it-environment

[8] – https://www.paloaltonetworks.com/cyberpedia/asm-tools-comparison

[9] – https://fortifydata.com/blog/build-a-scalable-asm-attack-surface-management-strategy/

[10] – https://www.netspi.com/blog/executive-blog/attack-surface-management/how-to-use-attack-surface-management-for-continuous-pentesting/

[11] – https://www.paloaltonetworks.com/cyberpedia/asm-tools

[12] – https://www.bitsight.com/blog/5-tips-crafting-cybersecurity-risk-remediation-plan

Exploitability refers to whether a vulnerability can theoretically be exploited, while actual targeting reflects whether threat actors are actively using it in real-world attacks. A high CVSS score vulnerability that attackers never exploit presents less risk than a lower-scored vulnerability actively used in ransomware campaigns. Effective security programs prioritize based on real attacker intent rather than theoretical severity scores.

Point-in-time assessments become outdated immediately when development teams deploy new code or infrastructure changes. Continuous discovery maintains real-time surveillance to identify anomalies without delay, detecting when new assets appear, configurations change, or systems go offline. This approach is essential since attack surface management tools typically discover 30% more cloud assets than security teams even know they have.

Threat intelligence helps security teams focus on vulnerabilities that attackers are actually targeting rather than chasing theoretical risks. By incorporating dark web intelligence, adversary research, and indicators of compromise, teams can identify which exploits are being weaponized in active campaigns. This context-driven approach ensures resources are spent on exposures that represent genuine threats to the organization.

Organizations should prioritize platforms offering an hourly scanning cadence for near-real-time detection, verified exposures with working proof-of-concept to reduce false positives, novel security research providing early vulnerability alerts, and seamless integration with existing security tools like SIEM and SOAR. The platform should also provide comprehensive asset discovery across cloud and on-premises infrastructure, with automated remediation workflows.

Bio

Lizzie is an experienced IT and cybersecurity marketing professional with six years of specialist experience in the industry. Lizzie produces a range of content – from blogs and long-form articles to newsletters and social media – with a focus on writing that informs and engages technical audiences. With a solid understanding of the cybersecurity landscape, Lizzie brings clarity and credibility to complex topics.